This is a rechargeable MobiWire 178089412 battery (3.8V/4.35V, 2270mAh/8.63Wh). Built for quality and a long service life, this 178089412 battery lets you keep working while you are on the move for business trips or vacations. All our Li-ion replacement batteries for the MobiWire 178089412 cell phone have been tested to match or exceed original battery performance and are 100% compatible with the original manufacturer specifications. Every replacement battery for the MobiWire 178089412 is tested and validated on MobiWire devices to ensure it is safe for your phone. Increased capacity extends your working hours at the office, in meetings or classes, or while traveling.

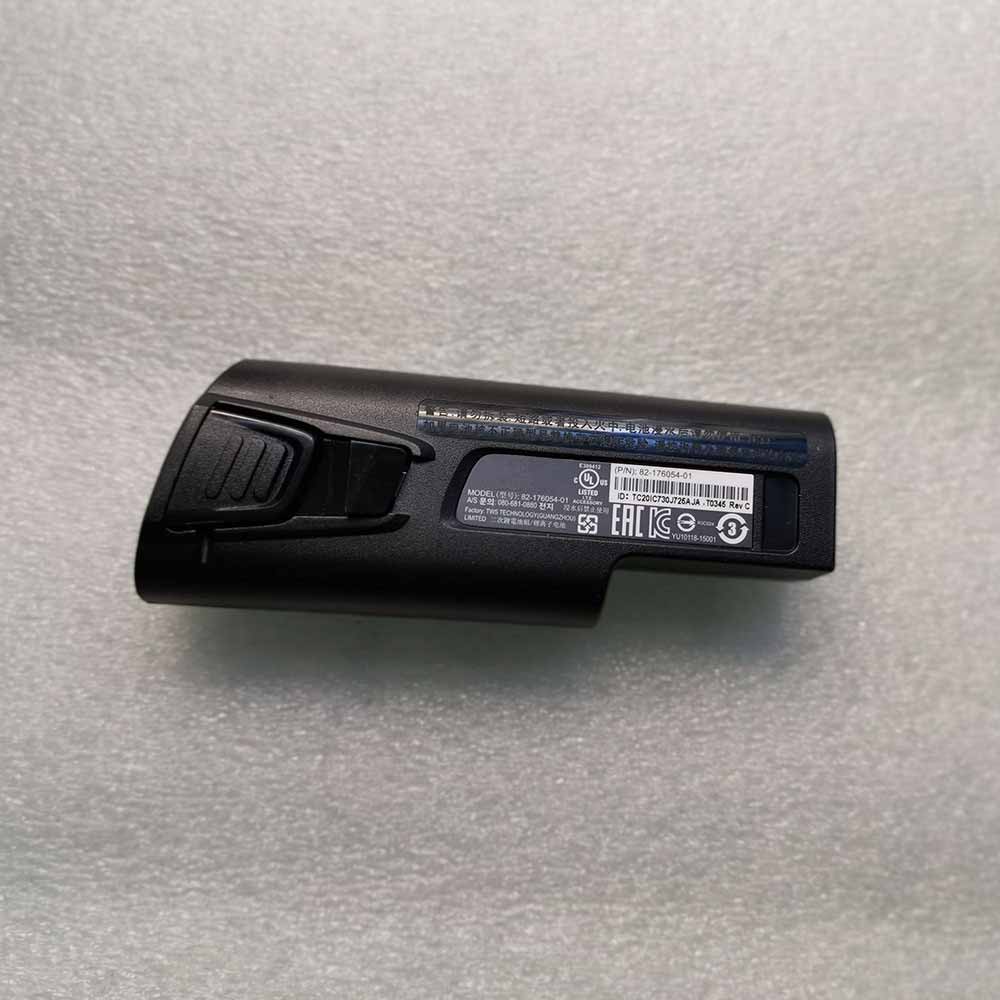

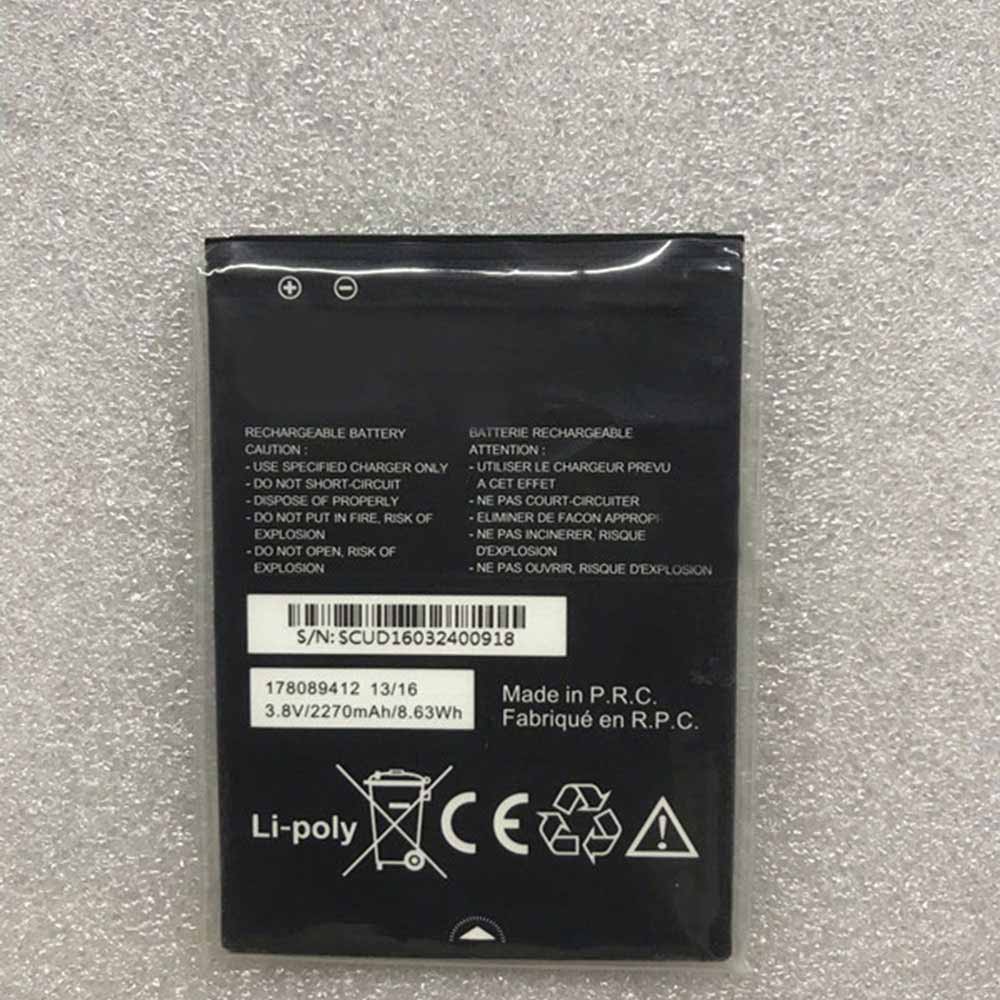

MobiWire 178089412 replacement battery, 3.8V/4.35V, 2270mAh/8.63Wh. Buy a cheap MobiWire 178089412 replacement battery from batteriestore.co.uk now. We follow a strict testing process to ensure that the products you order (like this battery for the MobiWire 178089412) work correctly before they are packed and sent to you. Before placing an order, please make sure that the shape of your old original MobiWire 178089412 battery matches the battery shown in our images. Free shipping and a one-year warranty!

Product Description:

Type: Li-ion

Brand: MobiWire

Voltage: 3.8V/4.35V

Capacity: 2270mAh/8.63Wh
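The two capacity figures describe the same battery in different units. As a quick check, the sketch below converts mAh to Wh, assuming (this is not stated in the listing) that 3.8V is the nominal voltage and 4.35V is the full-charge voltage. The function name `energy_wh` is only illustrative.

```python
def energy_wh(capacity_mah: float, nominal_voltage: float) -> float:
    """Convert a capacity in mAh to energy in Wh at the given nominal voltage."""
    # mAh -> Ah, then Ah * V = Wh
    return capacity_mah / 1000.0 * nominal_voltage


# Figures from the listing; 3.8V is assumed to be the nominal voltage.
print(round(energy_wh(2270, 3.8), 2))
```

Under that assumption, the result agrees with the 8.63Wh figure in the listing.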

TOP REASONS TO BUY FROM US

✔ Quality replacement battery with high capacity

✔ Longer lifespan, thanks to modern lithium technology with no memory effect

✔ Guaranteed safety: protected against short circuits, overheating and overvoltage

✔ Each cell is separately tested in order to ensure professional standards are met.

✔ 100% compatible in replacing your original battery

Compatible Part Numbers:

Compatible with the following devices:

MobiWire 178089412

How to care for your 178089412 battery

This is the replacement MobiWire 178089412 battery. You can extend its life significantly through proper care and handling.

- Any new 178089412 battery should first be fully charged by leaving it plugged in overnight. Once a phone battery is fully charged, it will not accept any more power. It is common practice to leave cell phones plugged in after they have been turned off so that the battery is fully charged the next time you use the phone. The battery is probably fully charged within 5 hours.

- A brand-new 178089412 cell phone battery is in a weakened condition because it has not been conditioned yet. Condition and break in your new battery by performing 5 partial discharge and recharge cycles during the first week after you receive it.

- Run on battery power without the AC adapter at least once a week to keep the 178089412 battery cells active and at their peak potential. When your cell phone is plugged into the power adapter, the battery goes into ‘standby mode’ while the phone runs directly on electricity from the wall outlet.

- Even if your battery indicates that it is fully charged, it will return to a weakened state within a few months unless it gets some exercise through partial discharge and recharge cycles while running on battery power alone.

- If your 178089412 battery has not been actively used for 2 months or more, condition it again with 5 partial discharge and recharge cycles to increase the battery’s capacity and run time.

- Don’t run your battery all the way to empty. Your battery will have a longer service life if it receives shallow discharge and recharge cycles. Research has shown that the useful life of the battery is much longer if you begin recharging before the remaining charge drops to 20%. Recharging the 178089412 battery after even 10 minutes of use will not reduce its life span, because lithium batteries do not have the memory effect found in older NiCad battery technology. NiCad batteries were used only in early cell phone models, not in current ones.

- Never run your battery below the 2% critically low warning point. Below this point, the battery may not have enough energy left even to recharge, which may effectively ‘kill’ your 178089412 battery.
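The two thresholds in the tips above (start recharging by 20%, never drop below 2%) can be written as a simple rule. The sketch below is only an illustration: the function name `charge_advice` and the returned labels are hypothetical, and how a phone actually reports its charge level is not covered here.

```python
RECHARGE_AT_PERCENT = 20  # from the tips: begin recharging before reaching 20%
CRITICAL_PERCENT = 2      # from the tips: never run below the 2% warning point


def charge_advice(percent: float) -> str:
    """Classify a battery level (0-100) according to the care tips above."""
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    if percent < CRITICAL_PERCENT:
        return "critical"      # the battery may not have enough energy to recharge
    if percent <= RECHARGE_AT_PERCENT:
        return "recharge now"  # shallow cycles give a longer service life
    return "ok"


for level in (80, 20, 1):
    print(level, charge_advice(level))
```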

How can I extend my battery run time?

You can extend your 178089412 battery run time by minimizing the power drain on your battery. A new 3-cell battery will run for approximately 2 to 2.5 hours. Even longer times can be achieved by minimizing the power consumption of your laptop. Here are the top ways to reduce your power consumption.

- Avoid using the CD or DVD drive as the motor consumes a fair amount of power.

- Turn off Wi-Fi if you are not using the internet.

- Remove any devices plugged into your USB ports or other ports.

- Close programs you are not using. Minimizing the load on your CPU can sometimes keep the cooling fan from switching to its high-speed mode.

- Increase your memory to 4 gigabytes. This creates less work for your hard drive, because data is temporarily written to the hard drive when your memory cannot hold all the data on the many pages you may be accessing.

- Reduce the brightness of your laptop screen. The screen is by far the number one drain on your battery.
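To see why lowering power consumption extends run time, a rough estimate is stored energy divided by average power draw. The sketch below uses the 8.63Wh figure from this listing. The draw values are hypothetical placeholders, not measurements, and real run time also depends on battery age and temperature.

```python
def runtime_hours(energy_wh: float, average_draw_w: float) -> float:
    """Rough run-time estimate: stored energy (Wh) / average power draw (W)."""
    if average_draw_w <= 0:
        raise ValueError("average_draw_w must be positive")
    return energy_wh / average_draw_w


BATTERY_WH = 8.63  # capacity stated in this listing

# Hypothetical average draws, chosen only to show the trend.
scenarios = [
    ("bright screen, Wi-Fi on", 2.0),
    ("dimmed screen, Wi-Fi off", 1.0),
]
for label, draw_w in scenarios:
    print(f"{label}: about {runtime_hours(BATTERY_WH, draw_w):.1f} h")
```

Halving the average draw doubles the estimated run time, which is why screen brightness matters so much.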